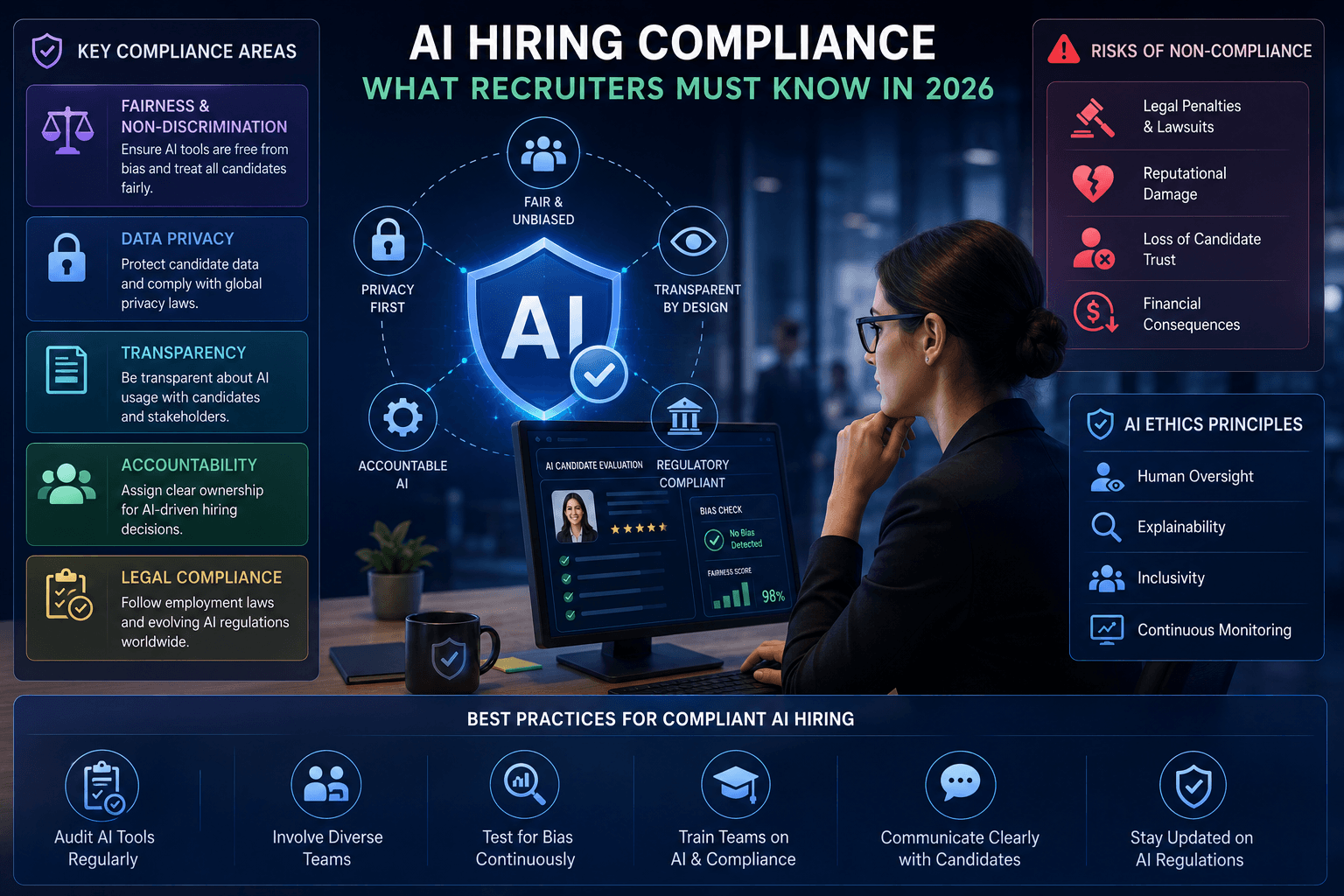

AI Hiring Compliance: What Recruiters Must Know in 2026

From the EU AI Act to state-level regulations, here's how new compliance laws are reshaping AI-powered recruitment

In 2026, AI-driven hiring is governed by overlapping rules across jurisdictions, most notably the EU AI Act (high-risk obligations), Colorado's AI Accountability Act, NYC Local Law 144 (annual independent bias audits), and expanding California transparency requirements. To comply, recruiters must ensure transparency, ongoing bias auditing, robust documentation, clear candidate notice and consent, and meaningful human oversight. This guide covers the core requirements, how to audit your tools, and how to build a compliance-first hiring strategy.

The recruitment landscape is transforming at an unprecedented pace. Artificial intelligence has revolutionized how organizations identify, screen, and evaluate talent—enabling recruiters to process thousands of applications in seconds and identify top candidates with remarkable precision. Yet this technological revolution comes with a critical caveat: regulators worldwide are watching closely.

As we move deeper into 2026, AI compliance in hiring has become mandatory, not optional. The European Union's AI Act is being applied in phases, Colorado's AI accountability law is scheduled to take effect on June 30, 2026, New York City's Local Law 144 requires bias audits for automated employment decision tools, and California continues to expand its AI regulations. For recruiters and talent acquisition leaders, understanding and implementing AI compliance isn't just about legal risk management. It's about building trust, protecting your brand, and ensuring fair opportunities for all candidates.

What Is AI Compliance in Hiring?

AI compliance in hiring refers to the practice of ensuring that artificial intelligence systems used in recruitment processes meet legal, regulatory, and ethical standards designed to protect candidates and prevent discrimination. At its core, AI compliance addresses a fundamental concern: recruitment algorithms can perpetuate and amplify human biases at scale, potentially discriminating against protected groups and limiting opportunities for qualified candidates. This is the crux of algorithmic bias in hiring, which compliance frameworks are designed to detect and mitigate.

Unlike traditional hiring bias, which affects individual hiring decisions, algorithmic bias can systematically exclude entire demographic groups from job opportunities. For example, if a machine learning model is trained on historical hiring data that reflects past discrimination, the algorithm learns to replicate those biased patterns. An AI system might favor male candidates in technical roles, discriminate against candidates with employment gaps, or penalize resumes with certain zip codes associated with specific racial demographics.

AI compliance frameworks require organizations to implement safeguards that prevent this type of systematic discrimination. These safeguards include transparency about how AI systems make decisions, regular auditing for bias, documentation of algorithmic decision-making, consent mechanisms for candidates, and corrective actions when bias is detected.

The Regulatory Landscape in 2026

The regulatory environment for AI compliance in hiring has matured significantly by 2026. Multiple jurisdictions have enacted comprehensive legislation, creating a complex but navigable landscape for global recruiters.

The European Union AI Act

The EU AI Act, which entered into force in August 2024 and applies in phases, is the most comprehensive AI regulation globally. It explicitly classifies AI systems used for recruitment and candidate selection as high-risk, and most high-risk obligations are scheduled to apply from August 2026. Under the Act, providers of AI hiring tools must complete conformity assessments before placing their systems on the market and must maintain detailed technical documentation. Employers that deploy these tools must use them according to the provider's instructions, assign human oversight, retain the logs the system generates, and inform candidates when a high-risk AI system is used in decisions that affect them. The penalties for non-compliance are substantial: fines of up to €15 million or 3% of worldwide annual turnover, whichever is higher, for breaching high-risk obligations, and up to €35 million or 7% for prohibited AI practices. For EU AI Act hiring obligations, these high-risk requirements establish the baseline that recruiters and vendors must plan for.

The Colorado AI Accountability Act

Colorado's AI Accountability Law (SB 24-205) was signed in May 2024, and its effective date was later postponed to June 30, 2026. It requires developers and deployers of high-risk AI systems to use reasonable care to protect people from algorithmic discrimination. In the hiring context, this means organizations must be able to explain the functionality and performance of the AI systems they deploy. The Colorado law specifically requires impact assessments for high-risk AI systems, which include recruitment algorithms that make or substantially influence employment decisions. Organizations must maintain documentation of these assessments, notify candidates when such a system is used, and give candidates an opportunity to appeal an adverse decision, with human review where technically feasible.

New York City Local Law 144

NYC Local Law 144, which took effect in January 2023 with enforcement beginning in July 2023, specifically targets AI hiring tools. The law requires that any automated employment decision tool (AEDT), defined as technology that substantially assists or replaces discretionary decision-making in screening candidates for hiring or promotion, undergo an independent bias audit within the year before it is used. For recruiters in New York City, this means that if you're using AI screening, resume ranking, or predictive analytics that falls within that definition, you must commission annual bias audits from an independent auditor, publish a summary of the results, and notify candidates that the tool is being used. The audits must calculate selection rates and impact ratios across sex and race/ethnicity categories. Organizations that fail to comply face civil penalties of up to $500 for a first violation and between $500 and $1,500 for each subsequent violation.

California AI Hiring Regulations

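California has been proactive in AI regulation, with multiple rules affecting hiring. The state's approach focuses on transparency and algorithmic accountability. Civil Rights Council regulations that took effect in October 2025 apply the state's anti-discrimination law to automated-decision systems used in employment and require employers to retain related records. California regulations require that organizations disclose when they're using AI in hiring decisions and provide candidates with meaningful information about how algorithms assess their qualifications. Additionally, the privacy rights in the California Consumer Privacy Act have covered job applicants since January 2023, so candidates are treated as "consumers" with respect to the personal data collected during hiring.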

Why AI Compliance Matters for Recruiters

Beyond legal obligation, AI compliance in hiring addresses several critical business and ethical imperatives.

Risk Mitigation and Legal Protection

The financial and reputational costs of non-compliance are steep. In 2025, one major technology company faced a $25 million settlement related to alleged gender discrimination in its recruiting algorithm. Another company incurred over $5 million in direct costs addressing algorithmic bias in hiring. By implementing AI compliance measures, recruiters substantially reduce legal exposure. Documented compliance efforts, regular auditing, and evidence of good-faith attempts to prevent bias provide defensible positions if legal challenges arise.

Access to Top Talent and Employer Brand

Candidates increasingly care about how they're evaluated. A 2025 global survey found that 68% of job candidates want to know if AI is being used to screen their applications, and 54% would be less likely to apply for a position if they knew AI with known bias issues was being used to evaluate them. Organizations with demonstrable commitment to fair AI hiring practices attract more qualified candidates and enjoy stronger employer brand perception.

Operational Efficiency and Accuracy

Properly audited and compliant AI hiring systems often perform better than unaudited ones. Bias in recruitment algorithms frequently reflects historical data problems, not actual predictive power. By removing bias, organizations can improve the quality of their hiring decisions. Compliant systems tend to make better predictions about job performance because they're based on job-relevant factors rather than demographic proxies.

Key Compliance Requirements for AI Hiring Tools

Effective AI compliance in hiring requires attention to multiple dimensions of algorithmic governance.

Transparency and Explainability

Candidates and hiring teams must understand how AI systems make decisions. This doesn't necessarily mean disclosing proprietary algorithms—vendors can protect trade secrets—but it does mean explaining the factors that influence hiring decisions at a level candidates can understand. Explainability also serves internal compliance. Your hiring teams must understand what the AI system is actually doing. Too many organizations deploy recruitment technology without fully understanding its mechanics, making it impossible to identify or address bias issues.

Bias Auditing and Validation

Compliance requires systematic testing for bias across protected classes. These aren't one-time audits—they're ongoing processes that occur at multiple points: before deployment, periodically during operation (usually annually at minimum), and whenever significant changes are made to the system. Bias audits assess whether the AI system exhibits disparate impact—meaning it produces systematically different outcomes for different protected groups. Auditors look at multiple dimensions: selection rates across protected groups, hiring rates by demographic category, time-to-hire differences between groups, and qualitative assessments of whether outcomes align with legitimate job-related criteria.
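The audit triggers described above (before deployment, at least annually, and after significant changes) can be sketched as a simple scheduling check. This is an illustrative sketch rather than a legal test: the function name `audit_due`, its parameters, and the 365-day default are assumptions chosen for the example, and the real interval should come from the rules that apply in your jurisdictions.

```python
from datetime import date, timedelta
from typing import Optional


def audit_due(
    last_audit: Optional[date],
    today: date,
    changed_since_audit: bool,
    max_age_days: int = 365,  # assumed annual cadence; adjust per jurisdiction
) -> bool:
    """Return True when a new bias audit should be scheduled."""
    # Never audited: an audit is needed before the tool is deployed.
    if last_audit is None:
        return True
    # A significant change to the system invalidates the previous audit.
    if changed_since_audit:
        return True
    # Otherwise, re-audit once the last audit is older than the allowed age.
    return today - last_audit > timedelta(days=max_age_days)


# Example: audited seven months ago, no changes since, so no audit is due yet.
needs_audit = audit_due(date(2025, 6, 1), date(2026, 1, 1), changed_since_audit=False)
```

What counts as a "significant change" is a judgment call the sketch leaves to the caller; retraining the model or adding a new input feature are typical candidates.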

Documentation and Record Keeping

Regulatory compliance requires comprehensive documentation. This includes technical specifications of the AI system, training data sources and composition, performance metrics, audit reports, any complaints or concerns raised about the system, and modifications made in response to identified issues. Documentation demonstrates good-faith compliance efforts to regulators and creates accountability within the organization for AI hiring decisions.
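One way to keep these records consistent is to store them in a single structured record per tool. The sketch below is a minimal illustration, assuming a hypothetical `ToolComplianceRecord` class whose field names simply mirror the documentation categories listed above; the tool name and sample values are made up, and no regulation prescribes this exact schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ToolComplianceRecord:
    """Hypothetical per-tool record mirroring the documentation categories."""
    tool_name: str
    technical_specification: str
    training_data_sources: list
    performance_metrics: dict
    audit_reports: list = field(default_factory=list)
    complaints: list = field(default_factory=list)
    modifications: list = field(default_factory=list)

    def log_modification(self, date_iso: str, description: str, reason: str) -> None:
        # Record what changed and why, so the rationale survives staff turnover.
        self.modifications.append(
            {"date": date_iso, "description": description, "reason": reason}
        )


record = ToolComplianceRecord(
    tool_name="resume-screener",  # made-up tool name
    technical_specification="Gradient-boosted ranking model, v2",
    training_data_sources=["2019-2024 internal applications"],
    performance_metrics={"validation_auc": 0.81},  # illustrative value only
)
record.log_modification(
    "2026-03-01",
    "Removed zip code feature",
    "Audit flagged it as a possible demographic proxy",
)

# Serialize to JSON so the record can be archived or handed to an auditor.
as_json = json.dumps(asdict(record), indent=2)
```

Keeping the reason next to each modification is the design choice that matters here: it ties every change back to the audit finding or complaint that prompted it.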

Consent and Candidate Notification

Multiple regulations require that candidates know when AI is being used to evaluate them. This transparency requirement means organizations must disclose AI use in job postings, during the application process, or before making decisions based on algorithmic assessments. The level of detail required varies by jurisdiction, but best practice is always full transparency.

Human Oversight and Review

Compliance frameworks universally require human involvement in hiring decisions. Humans must be in the loop—meaning hiring managers review and validate AI recommendations before making final decisions. This isn't just a compliance requirement—it's a practical safeguard. Humans can recognize when algorithmic recommendations conflict with actual job requirements or when edge cases require contextualization.
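In workflow terms, keeping a human in the loop means an AI recommendation cannot become a decision until a named reviewer signs off. The following is a rough sketch under that assumption; `Recommendation`, `finalize`, and `is_actionable` are hypothetical names invented for illustration, not part of any particular platform.

```python
from dataclasses import dataclass
from typing import Optional

VALID_DECISIONS = {"advance", "reject"}


@dataclass
class Recommendation:
    candidate_id: str
    ai_suggestion: str                    # what the AI tool proposed
    reviewer: Optional[str] = None        # set only when a human signs off
    final_decision: Optional[str] = None  # the human's decision, which may differ


def finalize(rec: Recommendation, reviewer: str, decision: str) -> Recommendation:
    """Record a human decision; the AI suggestion alone is never final."""
    if not reviewer:
        raise ValueError("a named human reviewer is required")
    if decision not in VALID_DECISIONS:
        raise ValueError(f"decision must be one of {sorted(VALID_DECISIONS)}")
    rec.reviewer = reviewer
    rec.final_decision = decision
    return rec


def is_actionable(rec: Recommendation) -> bool:
    """Only recommendations with a human sign-off may trigger next steps."""
    return rec.reviewer is not None and rec.final_decision is not None


rec = Recommendation(candidate_id="c-001", ai_suggestion="reject")
pending = is_actionable(rec)                       # False: no human review yet
finalize(rec, reviewer="j.doe", decision="advance")  # reviewer overrides the AI
```

Storing the AI suggestion and the final decision separately also gives auditors a record of how often reviewers actually override the tool.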

How to Audit Your AI Recruitment Tools for Bias

Implementing bias audits is a core compliance responsibility. Start by cataloging every AI or algorithmic tool involved in your hiring process. This includes obvious systems like applicant tracking system algorithms, resume screening tools, and video interview analysis platforms. It also includes less obvious applications like job description generators and ranking tools. As a working rule, if a tool makes or materially influences decisions that affect hiring outcomes, treat it as in scope for auditing.

Assess the risk level of each tool. A system that provides suggestions to human recruiters who can easily override them poses lower risk than a system making binary accept/reject decisions with minimal human review. High-risk systems require more frequent and rigorous audits.
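The inventory and risk assessment described above can be captured in a small script. This is a sketch under stated assumptions: the `HiringTool` fields, the three tier labels, and the tiering logic are illustrative choices, not categories defined by any regulation, and the tool names are invented.

```python
from dataclasses import dataclass


@dataclass
class HiringTool:
    name: str
    makes_final_decision: bool  # True if it accepts or rejects on its own
    human_can_override: bool    # True if recruiters can easily overrule it


def risk_tier(tool: HiringTool) -> str:
    """Assign an assumed risk tier based on autonomy and override ability."""
    if tool.makes_final_decision and not tool.human_can_override:
        return "high"
    if tool.makes_final_decision or not tool.human_can_override:
        return "medium"
    return "low"


inventory = [
    HiringTool("auto-reject filter", makes_final_decision=True, human_can_override=False),
    HiringTool("resume ranker", makes_final_decision=False, human_can_override=True),
    HiringTool("video interview scorer", makes_final_decision=True, human_can_override=True),
]

# Map each tool to its tier so audit effort can be prioritized.
tiers = {tool.name: risk_tier(tool) for tool in inventory}
```

With this made-up inventory, the auto-reject filter lands in the high tier and would be first in line for more frequent, more rigorous audits.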

Bias audits employ several methodologies. Statistical parity analysis compares selection rates across demographic groups. Impact ratio analysis uses the four-fifths rule, which checks whether each group's selection rate is at least 80% of the rate for the group with the highest selection rate. Regression analysis identifies whether protected characteristics correlate with outcomes after controlling for job-relevant factors. Many organizations work with specialized bias audit firms that bring expertise and independence.
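The impact ratio calculation is simple enough to sketch directly. The example below is a minimal illustration, not a full audit methodology: the function names are hypothetical, the group labels and counts are made-up data, and a real audit would also consider sample sizes and statistical significance.

```python
from collections import Counter


def selection_rates(records):
    """Return {group: selected / applicants} from (group, was_selected) pairs."""
    applicants = Counter()
    selected = Counter()
    for group, was_selected in records:
        applicants[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / applicants[group] for group in applicants}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group has any selections; impact ratio is undefined")
    return {group: rate / top for group, rate in rates.items()}


def flag_four_fifths(ratios, threshold=0.8):
    """Return groups whose impact ratio falls below the four-fifths threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Made-up data: 100 applicants per group, 50 and 30 selected respectively.
records = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

rates = selection_rates(records)    # group_a: 0.5, group_b: 0.3
ratios = impact_ratios(rates)       # group_a: 1.0, group_b: about 0.6
flagged = flag_four_fifths(ratios)  # ["group_b"]
```

Here group_b's selection rate is 60% of group_a's, which is below the 80% threshold, so it is flagged for closer review. A flag is a signal to investigate, not proof of discrimination on its own.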

If audits identify bias, corrective actions are necessary. These might include reweighting algorithm parameters, removing proxies that correlate with protected characteristics, requiring human review for edge cases, or replacing the system entirely. Documentation of corrective actions is critical—regulators want to see not just that bias was identified, but that organizations took concrete steps to address it.

Building a Compliance-First AI Hiring Strategy

Moving beyond audit and into proactive compliance requires systematic strategic planning. A mature AI hiring compliance program ties these elements together across people, process, and technology.

Establish clear governance structures with defined roles and responsibilities for AI hiring compliance. This typically includes a compliance owner or team, AI audit coordinators who oversee bias testing, hiring stakeholders who understand compliance requirements, and technology partners who ensure systems meet compliance standards.

If you're using third-party recruitment technology, your contracts should explicitly address compliance. Require vendors to certify that their systems have been tested for bias. Request copies of audit reports. Include contractual obligations that vendors maintain compliance and provide updates when bias is identified.

Your hiring teams must understand compliance requirements through proper training. Training should cover basic concepts of algorithmic bias, the organization's specific compliance obligations, how to identify when AI tools might be producing biased outcomes, and processes for raising concerns.

Develop clear communications for candidates about AI use in hiring. These communications should explain what AI tools are used, what factors they consider, how candidates can request human review, and how candidates can appeal decisions they believe are unfair.

How TheHireHub.ai Ensures Compliant AI Recruitment

TheHireHub.ai was built from the ground up with AI compliance as a foundational principle, not a secondary feature. The platform incorporates several key compliance mechanisms that address the requirements outlined above.

First, TheHireHub.ai provides complete transparency about algorithmic decision-making. The platform explains to candidates which AI systems are involved in evaluation and what factors those systems consider. Hiring teams can see detailed explanations for every algorithmic recommendation.

Second, TheHireHub.ai conducts regular bias audits and provides audit reports directly to users. The platform tracks demographic data across hiring processes and runs statistical analyses to identify whether disparate impact exists. When bias is detected, TheHireHub.ai alerts users and recommends corrective actions.

Third, the platform maintains comprehensive documentation of all algorithmic decisions and audit results. This documentation is automatically generated and organized, making it easy to demonstrate compliance to regulators if needed.

Fourth, TheHireHub.ai ensures human oversight through its workflow design. While the platform uses AI to process applications and surface top candidates, final hiring decisions remain with human hiring managers. By choosing a platform like TheHireHub.ai that prioritizes compliance, organizations can ensure they're building hiring processes that are fair, transparent, and legally defensible.

Conclusion

AI compliance in hiring is no longer a nice-to-have or a peripheral concern. It's a fundamental requirement for responsible talent acquisition in 2026. The regulatory landscape, while complex, is becoming clearer as more jurisdictions converge on the same core duties: transparency, bias auditing, documentation, and human oversight. Organizations that get ahead of compliance requirements now will enjoy competitive advantages in the form of reduced legal risk, stronger employer brands, and better hiring quality.

The path to compliance involves understanding your regulatory obligations in the jurisdictions where you operate, auditing your existing systems for bias, implementing documented corrective actions, and building governance structures that maintain compliance over time. Technology platforms like TheHireHub.ai can substantially ease this journey by providing compliance mechanisms built into core functionality rather than bolted on afterward. Strong AI hiring compliance practices turn regulation into an engine for better outcomes.

The organizations that embrace AI compliance won't just be protecting themselves legally—they'll be building recruiting processes that are fairer, more transparent, and ultimately more effective at identifying truly qualified candidates. In an increasingly talent-competitive market, that's a competitive advantage worth pursuing.

Frequently Asked Questions

What are the key AI hiring laws recruiters must follow in 2026, and how do their requirements differ?

Four regimes dominate. Under the EU AI Act, recruitment AI is "high-risk," requiring pre-market conformity assessments by providers, detailed documentation, transparency to candidates, human oversight, and log retention, with penalties of up to €15 million or 3% of worldwide annual turnover for breaching high-risk obligations (and up to €35 million or 7% for prohibited practices). Colorado's AI Accountability Act, scheduled to take effect on June 30, 2026, mandates explainability and documented impact assessments for high-risk systems and gives candidates the ability to appeal adverse decisions with human review. NYC Local Law 144 requires independent bias audits of automated employment decision tools before use and annually, focusing on disparate impact, with penalties of up to $500 for a first violation and $500 to $1,500 for each subsequent violation. California emphasizes transparency and algorithmic accountability, requiring disclosure of AI use and meaningful information about how algorithms assess qualifications, and extends consumer privacy protections to candidates.

Which tools in my hiring workflow actually trigger compliance duties like audits and notices?

Any AI or algorithmic tool that makes or materially influences hiring outcomes can trigger compliance. That includes ATS scoring/ranking features, resume screening tools, video interview analysis platforms, job description generators, and candidate ranking or recommendation engines. NYC explicitly covers "automated employment decision tools" that substantially assist or replace discretionary decisions when screening candidates. As a rule of thumb, if a system affects candidate progression or decisions, treat it as in-scope. Tools that make binary accept/reject calls with minimal human review are higher risk and require more rigorous, frequent audits than advisory tools that recruiters can easily override.

What does a compliant bias audit include, and how often should I run it?

Conduct audits before deployment, at least annually during use, and whenever you make significant changes. A robust audit inventories all AI tools, assesses their risk, and tests for disparate impact using methods such as statistical parity analyses, the four-fifths (80%) impact ratio, and regression analyses that control for job-relevant factors. Auditors examine selection and hiring rates by protected group, time-to-hire differences, and whether outcomes align with legitimate job criteria. Independent third-party audits are required in NYC. If bias is found, document and implement remediations (e.g., reweight features, remove proxy variables, add human review for edge cases, or replace the system) and keep clear records of all changes.

What documentation and candidate notices do regulators expect?

Maintain comprehensive records: technical specifications, training data sources and composition, performance metrics, audit reports, complaints or concerns, and all modifications with rationales. Provide clear candidate notice that AI is used, explain the key factors the system considers at an understandable level, and secure consent where required. Offer meaningful human oversight—candidates should be able to request human review and appeal decisions they believe are unfair. Jurisdictional details vary, but best practice is transparent disclosure in job materials and during the application process or before making AI-influenced decisions.

How do I operationalize a compliance-first AI hiring program—and how can TheHireHub.ai help?

Build governance with defined roles (compliance owner, audit coordinators, trained hiring stakeholders, and technology partners), require vendor accountability in contracts (bias-testing certifications, audit reports, and update obligations), train recruiters and hiring managers on bias and obligations, and publish clear candidate communications. Keep humans in the loop for final decisions and document corrective actions when issues arise. Platforms like TheHireHub.ai streamline this by embedding transparency (explanations for candidates and teams), regular bias auditing with alerts and recommended remediations, comprehensive auto-generated documentation and audit trails, and workflows that ensure human-in-the-loop oversight.